Mistral Medium 3.5: 128B Open Model Tops SWE-Bench

Mistral's 128B dense model scores 77.6% on SWE-Bench Verified and ships with open weights. Here's what matters for developers.

The headline number: 77.6% on SWE-Bench Verified

Mistral just dropped Medium 3.5 — a 128-billion parameter dense model that scores 77.6% on SWE-Bench Verified, putting it at the top of the open-weight leaderboard for real-world coding tasks. This isn't a mixture-of-experts trick or a reasoning-only specialist. It's a single merged model handling instruction following, reasoning, coding, and multimodal inputs in one architecture.

The release landed with immediate traction: 500+ points on Hacker News and significant buzz on X. Developers hungry for open alternatives to closed coding models — Claude, GPT-4.x, Gemini — now have a self-hostable option that competes on the benchmark that matters most for agentic coding.

What makes Medium 3.5 different from previous Mistral releases

Mistral has historically shipped specialist models: Codestral for code, Mistral Large for general reasoning, Pixtral for vision. Medium 3.5 breaks that pattern. It's Mistral's first merged flagship — a single model trained to handle instruction, reasoning, and coding without needing separate specialized checkpoints.

Key specs from the announcement (per mistral.ai):

- Parameters: 128B dense (not MoE)

- Modality: Multimodal (text + vision)

- SWE-Bench Verified: 77.6%

- License: Modified MIT (open weights)

- Self-hosting: Runs on 4 GPUs

- Status: Public preview

The "modified MIT" license is worth noting. It's not a stock permissive license like plain MIT or Apache 2.0 (Meta, for comparison, ships Llama under its own community license), but it's substantially more open than the flagship closed models from OpenAI, Anthropic, or Google. For enterprises that need to run models on their own infrastructure, whether for compliance, latency, or cost reasons, this is the gate that matters.

SWE-Bench context: what 77.6% actually means

SWE-Bench Verified is the gold standard for evaluating whether a model can actually fix real software bugs. It presents models with GitHub issues from popular Python repositories and asks them to generate patches that pass the project's test suite. It's hard: when OpenAI introduced the Verified subset in August 2024, GPT-4o resolved about 33% of its tasks.

For context on where 77.6% sits relative to the field:

| Model | SWE-Bench Verified | Open weights? |
|---|---|---|
| Mistral Medium 3.5 | 77.6% | Yes (modified MIT) |
| Devstral (Mistral's prior coding model) | ~46% (estimated from earlier reports) | Yes |
| Claude Sonnet 4 (with scaffolding) | ~72% (per Anthropic's published figures) | No |
| GPT-4.1 (OpenAI) | ~55% (per OpenAI's published figures) | No |

Important caveat: SWE-Bench scores depend heavily on scaffolding — the agent harness, retrieval setup, and retry logic wrapping the model. Mistral hasn't published full details on what scaffolding produced their 77.6% number. Until independent reproductions land (and they will — the HN crowd is already on it), treat this as a strong signal rather than a final verdict.
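To make the scoring concrete, here is a minimal sketch of how a SWE-Bench style "resolved" percentage is computed. It assumes the benchmark's usual criterion: a task counts only if the tests that the fix is meant to repair now pass and the tests that passed before still pass. The `InstanceResult` class and function names are illustrative and not taken from any official harness.

```python
from dataclasses import dataclass


@dataclass
class InstanceResult:
    """Test outcomes for one task after applying the model's patch (illustrative type)."""
    fail_to_pass: dict[str, bool]  # tests that failed before the fix; True = passes now
    pass_to_pass: dict[str, bool]  # tests that passed before the fix; True = still passes


def is_resolved(result: InstanceResult) -> bool:
    # A task counts only if the target tests now pass AND nothing regressed.
    return all(result.fail_to_pass.values()) and all(result.pass_to_pass.values())


def resolved_rate(results: list[InstanceResult]) -> float:
    """Percentage of tasks resolved, i.e. the headline benchmark number."""
    if not results:
        return 0.0
    return 100.0 * sum(is_resolved(r) for r in results) / len(results)


# SWE-Bench Verified contains 500 tasks, so 77.6% corresponds to 388 resolved tasks.
VERIFIED_SIZE = 500
resolved_count = round(0.776 * VERIFIED_SIZE)
print(resolved_count)
```

Note that nothing in this calculation captures how many attempts, retries, or retrieval steps the harness used to arrive at each patch, which is why the scaffolding details matter when comparing scores.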

Remote agents: Vibe mode and Work mode in Le Chat

The model release is paired with two new agentic features in Le Chat (Mistral's consumer/developer chat interface):

Vibe mode

An async cloud-based coding agent. You describe what you want built, and Medium 3.5 works on it remotely — writing code, running it in a sandboxed environment, iterating on errors. Think of it as Mistral's answer to Claude's artifact generation or Cursor's background agents, but running entirely on Mistral's infrastructure.

Work mode

A broader agentic mode for non-coding tasks — research, document analysis, multi-step workflows. Details are thinner here, but the framing suggests Mistral is building toward the same "AI employee" positioning that OpenAI and Anthropic are pursuing with their agent products.

My read: the agent modes are the business play. Open weights get developers in the door; hosted agents on Le Chat are where Mistral actually makes money. It's the Red Hat model applied to AI — give away the engine, sell the service.

Self-hosting on 4 GPUs: what that means in practice

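A 128B dense model at FP16 needs roughly 256GB of VRAM for the weights alone (128 billion parameters × 2 bytes each). Four A100-80GB cards give you 320GB, which leaves about 64GB for KV cache and activations: enough for modest context lengths. Four H100 80GB cards offer the same capacity, so they add no memory headroom, but their higher memory bandwidth gives you faster inference.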

Practical deployment scenarios:

- 4× A100 80GB: Minimum viable setup. Tight on memory for long contexts.

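- 4× H100 80GB: Same 320GB total, so no extra room for long sequences, but noticeably better throughput.

- 8× A100 40GB: Also 320GB in total, so it works if that's what your cluster has, at the cost of splitting the model across more cards.

- Quantized (AWQ/GPTQ): INT4 shrinks the weights to roughly 64GB, which could potentially fit on 2× H100, but expect quality tradeoffs on the hardest coding tasks.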

For comparison: at FP16, Llama 3.1 405B needs about 810GB for weights alone, more than a single 8× H100 80GB node (640GB) can hold. Medium 3.5 at 128B is substantially more accessible for self-hosting while apparently competing on coding benchmarks. That's the density argument, and it's a strong one.
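The sizing arithmetic in this section can be sketched in a few lines. This is a back-of-the-envelope estimate under simple assumptions: memory for weights is parameter count times bytes per parameter, 1 GB is taken as 10^9 bytes, and the overhead that real quantization formats add for scales and metadata is ignored. The function names are illustrative.

```python
# Approximate storage cost per parameter for common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Memory for weights alone, in GB. Excludes KV cache and activations."""
    return params_billion * BYTES_PER_PARAM[precision]


def headroom_gb(params_billion: float, precision: str, gpus: int, gb_per_gpu: float) -> float:
    """VRAM left for KV cache and activations; a negative value means the weights do not fit."""
    return gpus * gb_per_gpu - weight_memory_gb(params_billion, precision)


scenarios = [
    ("128B FP16 on 4x 80GB", 128, "fp16", 4, 80),
    ("128B FP16 on 8x 40GB", 128, "fp16", 8, 40),
    ("128B INT4 on 2x 80GB", 128, "int4", 2, 80),
    ("405B FP16 on 8x 80GB", 405, "fp16", 8, 80),
]
for label, params, precision, gpus, gb in scenarios:
    print(f"{label}: {headroom_gb(params, precision, gpus, gb):.0f} GB headroom")
```

The headroom figure is what bounds context length and batch size in practice, since the KV cache grows with both. A proper estimate of that would need the model's layer and attention-head configuration, which the announcement does not give.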

How this compares to the open-weight competition

The models Medium 3.5 most directly competes with:

- Qwen 2.5 72B / Qwen 3 (Alibaba): Strong on coding and math, widely deployed in Asia, but roughly half the parameter count. If Medium 3.5's 128B density buys meaningfully better coding performance, that's a differentiation worth paying for in VRAM.

- Llama 3.1 405B (Meta): Larger and more expensive to host. Generally strong but not specifically optimized for agentic coding workflows.

- DeepSeek V3 / R1 (DeepSeek): MoE architecture, very strong on reasoning. Only about 37B parameters are active per token, making it potentially cheaper to serve, but all 671B parameters still have to sit in memory.

- Devstral (Mistral's own): Medium 3.5 supersedes this for coding tasks. The jump from ~46% to 77.6% on SWE-Bench (if both are apples-to-apples) would be dramatic.

What we don't know yet

Honest gaps in the current information:

- Context window length: The announcement doesn't specify whether this is 32K, 128K, or something else. For agentic coding, context length matters enormously: the more of a repository the agent can hold in context at once, the less it depends on retrieval to find the relevant files.

- Scaffolding details for SWE-Bench: What agent harness produced 77.6%? How many retries? What retrieval? Independent benchmarkers will answer this within days.

- Inference speed: A dense 128B model reads all 128B parameters for every generated token, so it is typically slower per token than an MoE model that activates only a fraction of its weights. No latency benchmarks have been published yet.

- Pricing on La Plateforme: Mistral hasn't announced per-token API pricing for Medium 3.5 at the time of writing.

- The "modified MIT" license specifics: What exactly is modified? Commercial use restrictions? Output ownership clauses? The devil is in the details for enterprise adoption.
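Until real latency numbers arrive, the inference-speed question can at least be bounded with a rough estimate. The sketch below assumes single-stream decoding is limited by memory bandwidth, so each generated token requires reading every active weight once. The bandwidth values are approximate datasheet figures (about 2.0 TB/s for an A100 80GB SXM and 3.35 TB/s for an H100 SXM), the 37B-active MoE is a hypothetical comparison point, and interconnect overhead, KV cache reads, and batching are all ignored. Treat the results as optimistic upper bounds, not predictions.

```python
def decode_tokens_per_sec_bound(active_params_billion: float,
                                bytes_per_param: float,
                                gpus: int,
                                bandwidth_tb_s_per_gpu: float) -> float:
    """Upper bound on single-stream decode speed when weight reads dominate.

    Bound = aggregate memory bandwidth / bytes of active weights read per token.
    """
    bytes_per_token = active_params_billion * 1e9 * bytes_per_param
    aggregate_bandwidth = gpus * bandwidth_tb_s_per_gpu * 1e12
    return aggregate_bandwidth / bytes_per_token


# Approximate per-GPU memory bandwidth in TB/s (assumed datasheet values).
A100_TB_S = 2.0
H100_TB_S = 3.35

dense_a100 = decode_tokens_per_sec_bound(128, 2.0, 4, A100_TB_S)
dense_h100 = decode_tokens_per_sec_bound(128, 2.0, 4, H100_TB_S)
# Hypothetical MoE with 37B active parameters on the same bandwidth budget.
moe_a100 = decode_tokens_per_sec_bound(37, 2.0, 4, A100_TB_S)

print(f"dense 128B, 4x A100: {dense_a100:.1f} tok/s upper bound")
print(f"dense 128B, 4x H100: {dense_h100:.1f} tok/s upper bound")
print(f"MoE 37B active, 4x A100: {moe_a100:.1f} tok/s upper bound")
```

Under these assumptions the dense model tops out at roughly 31 tokens per second per stream on four A100s, which is why batching and quantization tend to matter so much for serving dense models of this size.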

Why this matters for the broader AI landscape

Three things I think are underappreciated here:

1. The open-weight coding gap is closing fast. Six months ago, the best open model on SWE-Bench was maybe 40-50%. Now we're at 77.6%. The closed-model moat for coding specifically is shrinking. If you're building an internal coding agent and you have the GPU budget to self-host, the "why not just use Claude/GPT?" argument gets weaker with each release like this.

2. Mistral is betting on density over scale. While Meta pushes to 405B+ and DeepSeek builds ever-larger MoE models, Mistral is trying to pack maximum capability into a 128B dense model that fits on a single node. This is a deliberate architectural bet — one that favors practical deployment over benchmark-chasing at any cost.

3. The "merged model" approach signals where the industry is heading. Instead of maintaining separate models for chat, code, reasoning, and vision, Mistral trained one model that does all of it. This is simpler to deploy, simpler to maintain, and — if the benchmarks hold — doesn't sacrifice much on any single axis. Expect other labs to follow this pattern.

Who should care

- Teams building internal coding agents: If you have 4+ A100/H100 GPUs available, Medium 3.5 gives you a self-hosted coding agent backbone that apparently rivals closed models. No per-token API fees, no data leaving your network.

- Developers already using Le Chat: The Vibe and Work mode agents are the immediate consumer-facing upgrade. Worth trying on your next project.

- Anyone tracking the open vs. closed model debate: This is another data point that open weights can compete at the frontier for specific, high-value tasks.

- Investors and analysts: Mistral continues to punch above its weight relative to its funding. A European lab producing frontier-competitive coding models with a fraction of the compute budget of US hyperscalers is a meaningful signal about where AI capability is heading.

The bottom line

Mistral Medium 3.5 is the strongest open-weight coding model released to date, provided the 77.6% SWE-Bench score holds up under independent evaluation. The merged architecture (instruction + reasoning + code + vision in one 128B dense model) is a clean design that's practical to deploy. The modified MIT license should make it accessible to most organizations, pending the exact terms.

The open questions — context length, exact license terms, inference latency, independent benchmark reproduction — will be answered within a week or two as the community gets its hands on the weights. For now, this is Mistral reminding everyone that open-weight models aren't just "good enough" alternatives to closed APIs. On coding specifically, they might be the best option available.

Keep reading

Anthropic's $1.8B Akamai Deal: Compute Strategy Explained

Anthropic signed a $1.8B, 7-year cloud deal with Akamai. Here's what it means for Claude's infrastructure and the AI compute race.

IREN's $3.4B NVIDIA Deal: AI Cloud Strategy Explained

IREN signed a $3.4B AI cloud deal with NVIDIA and a 5GW strategic partnership. Here's what it means for the neocloud race.

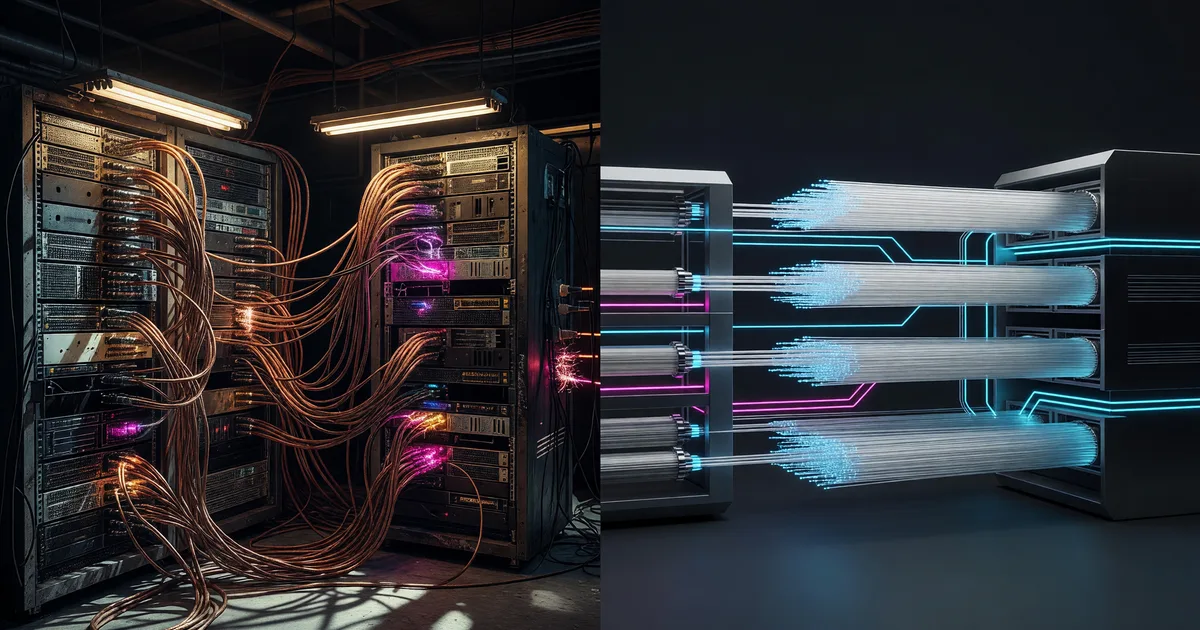

Nvidia's $3.2B Corning Deal: Why AI Needs Optics

Nvidia is investing up to $3.2B in Corning for AI optical infrastructure. Why glass fibers are replacing copper in GPU clusters.