Nvidia's $3.2B Corning Deal: Why AI Needs Optics

Nvidia is investing up to $3.2B in Corning for AI optical infrastructure. Why glass fibers are replacing copper in GPU clusters.

Nvidia Just Made a $3.2 Billion Bet on Glass

On May 6, 2026, Nvidia announced it would invest up to $3.2 billion in Corning — the company best known for Gorilla Glass on your phone — through a warrant structure tied to AI optical connectivity (per CNBC's reporting). Three new US factories. A 10x capacity increase. 3,000 new jobs. This isn't a vanity investment or a supply chain hedge. It's Nvidia telling the industry that copper interconnects are hitting a wall, and the next generation of AI infrastructure runs on light.

The deal is worth paying attention to not because of its size — $3.2B is a rounding error in the current AI infrastructure arms race — but because of what it signals about where the real bottleneck is moving. We've spent the last two years obsessing over GPU counts. The companies actually building these clusters are now obsessing over how data moves between those GPUs.

The Deal Structure: Warrants, Not a Check

An important nuance: Nvidia isn't writing Corning a $3.2 billion check. The investment comes through warrants — financial instruments that give Nvidia the right to purchase Corning shares at a set price, tied to Corning hitting manufacturing milestones for AI optical products. This structure does two things:

- Aligns incentives. Corning only gets the full benefit if it actually delivers the optical capacity Nvidia needs. No capacity, no warrant exercise.

- Locks in supply. Nvidia gets preferred access to Corning's expanded optical fiber and cable output — critical when every hyperscaler on Earth is trying to buy the same components.
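To make the incentive mechanics concrete, here is a minimal sketch of how a milestone-gated warrant behaves. Everything in it is hypothetical: the `Tranche` class, the milestone names, the share counts, and the prices are placeholders for illustration, not the actual terms of the Nvidia-Corning agreement, which haven't been published in this level of detail.

```python
from dataclasses import dataclass


@dataclass
class Tranche:
    """One milestone-gated slice of a warrant (all values hypothetical)."""
    milestone: str
    shares: int
    strike: float        # price per share the holder pays on exercise
    milestone_met: bool  # has the issuer hit the manufacturing milestone?


def intrinsic_value(tranche: Tranche, share_price: float) -> float:
    """Value to the holder of exercising one tranche; zero if not vested."""
    if not tranche.milestone_met:
        return 0.0
    return max(0.0, share_price - tranche.strike) * tranche.shares


def exercise_cost(tranches: list[Tranche]) -> float:
    """Cash paid to the issuer if every vested tranche is exercised."""
    return sum(t.shares * t.strike for t in tranches if t.milestone_met)


# Placeholder tranches, NOT the real deal terms.
tranches = [
    Tranche("factory 1 in production", 1_000_000, 50.0, True),
    Tranche("factory 2 in production", 1_000_000, 50.0, False),
]

print(exercise_cost(tranches))                          # 50000000.0
print(sum(intrinsic_value(t, 60.0) for t in tranches))  # 10000000.0
```

In this toy version, the unmet milestone contributes nothing on either side: no cash flows to the issuer and no value accrues to the holder. That is the "no capacity, no warrant exercise" logic in miniature.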

The three new factories are planned for North Carolina and Texas, according to the CNBC report. That's a deliberate onshoring play that fits the broader pattern of AI infrastructure companies bringing manufacturing back to the US — partly for supply chain resilience, partly because the political environment rewards it.

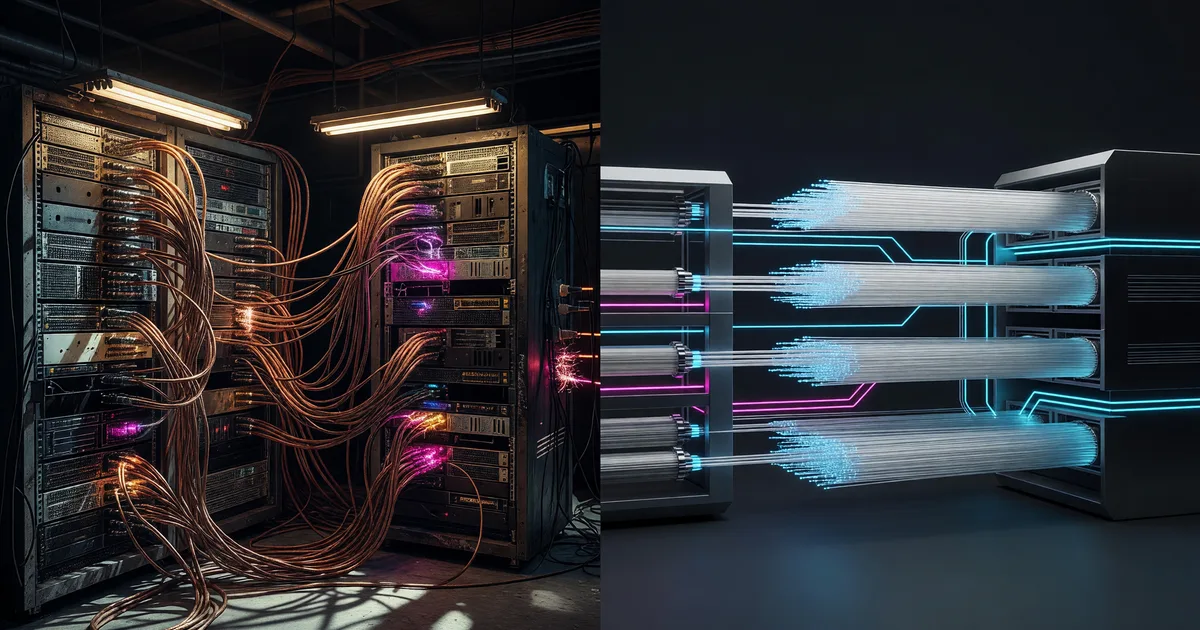

Copper vs. Optical: Why This Shift Matters Now

To understand why Nvidia cares this much about glass fibers, you need to understand the interconnect problem that's quietly becoming the defining constraint of large-scale AI training.

Current GPU clusters use copper cables for most short-range connections. Copper works fine at moderate scale: a few hundred GPUs communicating over NVLink or InfiniBand with copper interconnects is well-understood engineering. But as clusters push past 10,000, 50,000, or 100,000+ GPUs, copper starts falling short on several fronts:

| Factor | Copper | Optical Fiber |
|---|---|---|
| Power per bit transmitted | Higher; signal degrades, needs repeaters | Significantly lower at distance |
| Cable weight and density | Heavy, rigid, limits rack density | Thin, flexible, higher density |
| Maximum practical distance | ~5 meters at high bandwidth | Hundreds of meters to kilometers |
| Heat generation | Substantial; adds to cooling load | Minimal |
| Bandwidth scaling | Approaching physical limits | Room to grow with wavelength multiplexing |

Estimates cited around this deal put the power savings from co-packaged optics at 5-20x versus copper for data movement. That range is wide because it depends on distance and data rates, but the direction is unambiguous: at the scale Nvidia's customers are building, copper's power overhead becomes a serious problem.

My read: the GPU shortage narrative of 2023-2024 is giving way to an interconnect shortage narrative in 2026. You can have a million GPUs, but if they can't communicate efficiently, you don't have a cluster — you have a warehouse full of expensive space heaters.

What Co-Packaged Optics Actually Means

The term "co-packaged optics" (CPO) keeps showing up in AI infrastructure discussions, and it's worth explaining plainly. Traditional optical connections work like this: the GPU or switch has an electrical output, which travels through a short copper trace to a pluggable optical transceiver, which converts the electrical signal to light for fiber transmission.

Co-packaged optics eliminates the middle step. The optical components — lasers, modulators, photodetectors — are integrated directly onto the switch or processor package. Signal goes from silicon to light with minimal electrical path.

Why does this matter?

- Less power wasted. The electrical-to-optical conversion happens right at the chip instead of traveling through lossy copper first.

- Lower latency. Shorter path from compute to network means faster GPU-to-GPU communication.

- Higher bandwidth density. You can pack more optical channels into less space than copper cables allow.

Nvidia, Broadcom, Intel, and several startups are all racing toward CPO solutions. Corning's role in this ecosystem is manufacturing the glass — the optical fibers, waveguides, and connectors that the light actually travels through. Nvidia is essentially locking down the physical layer so its CPO designs have guaranteed material supply.

How This Compares to Other AI Infrastructure Deals

The Nvidia-Corning deal is part of a wave of massive AI infrastructure investments, but it targets a different layer of the stack than most. Here's how it stacks up:

| Deal | Amount | What It Buys | Layer |
|---|---|---|---|
| Nvidia → Corning (May 2026) | Up to $3.2B (warrants) | Optical fiber manufacturing capacity | Interconnect / physical |
| Anthropic ← SpaceX Colossus 1 (May 2026) | Undisclosed lease | 220K+ GPUs, 300MW facility | Compute |
| Microsoft AI infrastructure (2025-2026) | $80B+ announced | Data center construction globally | Facilities |
| Google custom TPU buildout | Multi-billion annually | Custom silicon + data centers | Compute + facilities |

What's different about the Corning deal is that Nvidia is investing upstream, in its own supply chain. Microsoft and Google are building facilities to house GPUs. Anthropic is leasing compute. Nvidia is securing the physical materials that connect the GPUs it sells. It's a play by the company that makes the processors to also control the wiring between them.

I think this matters more than its dollar amount suggests. $3.2B in warrants sounds modest next to Microsoft's $80B+ data center commitments. But optical infrastructure is a genuine chokepoint. You can build a data center shell in months. Manufacturing specialty optical fiber at the scale AI demands takes years of factory buildout — which is exactly what Corning is doing with these three new plants.

The Power Equation Nobody Talks About

Here's what's underappreciated about the copper-to-optical transition: it's not just about bandwidth. It's about power budgets.

A modern AI data center might draw 100-300 megawatts. A meaningful fraction of that power goes to moving data between GPUs rather than doing actual computation. Estimates of the exact fraction vary, but networking and interconnect power is a growing share of the total. Every watt spent on data movement is a watt not spent on matrix multiplication.

If optical interconnects deliver even the conservative end of the cited 5x power improvement over copper for data movement, that frees up significant power headroom. For a facility operator, that freed-up power translates directly into either more GPUs per facility or lower operating costs. Both are enormously valuable when power availability is itself a constraint — many hyperscalers are fighting over grid connections, negotiating with utilities, and even exploring nuclear power.
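Here is a back-of-the-envelope sketch of that arithmetic. The 100-300 MW facility sizes and the 5x and 20x improvement factors come from the figures above; the 10% interconnect share is purely an assumed placeholder, since no firm number is available, and the function name `freed_power_mw` is made up for this example.

```python
def freed_power_mw(facility_mw: float,
                   interconnect_share: float,
                   improvement: float) -> float:
    """Power freed when data-movement energy drops by a factor of `improvement`.

    interconnect_share: fraction of facility power spent moving data (assumed).
    improvement: e.g. 5.0 means the interconnect needs 1/5 of its old power.
    """
    if not 0.0 <= interconnect_share <= 1.0:
        raise ValueError("interconnect_share must be between 0 and 1")
    if improvement < 1.0:
        raise ValueError("improvement must be >= 1")
    interconnect_mw = facility_mw * interconnect_share
    return interconnect_mw * (1.0 - 1.0 / improvement)


ASSUMED_SHARE = 0.10  # placeholder, not a measured figure

for facility_mw in (100, 300):
    for improvement in (5, 20):
        freed = freed_power_mw(facility_mw, ASSUMED_SHARE, improvement)
        print(f"{facility_mw} MW, {improvement}x: {freed:.1f} MW freed")

# 100 MW, 5x: 8.0 MW freed
# 100 MW, 20x: 9.5 MW freed
# 300 MW, 5x: 24.0 MW freed
# 300 MW, 20x: 28.5 MW freed
```

Under these assumptions, the conservative 5x case already recovers 80% of the interconnect power budget, and going to 20x recovers 95%. The bigger lever is the share itself: if data movement eats a larger slice of the facility than the placeholder 10%, the freed headroom scales up proportionally.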

This is why Nvidia is willing to commit $3.2B to a glass company. Optical interconnects don't just improve performance — they change the economics of every AI data center Nvidia's GPUs go into.

The 10x Capacity Question

Corning has stated that the three new factories will increase its optical connectivity manufacturing capacity by 10x. That's an extraordinary scale-up, and it raises a question worth asking: is there really 10x demand coming?

The honest answer: we don't know yet, but the signals point to yes. Every major hyperscaler — Microsoft, Google, Amazon, Meta, Oracle — is building or expanding AI-focused data centers. xAI's Colossus facility in Memphis houses 200,000+ GPUs. Anthropic just leased a comparable cluster from SpaceX. These are all potential customers for AI-grade optical infrastructure.

The risk, of course, is that AI infrastructure buildout slows before Corning's expanded capacity comes online. If AI spending cools — or if a competitor develops a cheaper alternative to Corning's glass — the warrant structure gives Nvidia some protection, since it isn't committed to buying shares outright. But for Corning, the factories represent a real capital commitment.

What This Means for Nvidia's Strategy

Nvidia has spent the last several years evolving from "the GPU company" to "the AI infrastructure company." It designs GPUs (H100, B200, GB200), networking hardware (InfiniBand switches, NVLink), software stacks (CUDA, NeMo, NIMs), and now it's securing optical supply chains.

The pattern is vertical integration. Nvidia wants to sell complete data center solutions — not just chips, but the entire rack, the network connecting the racks, and now the glass carrying the signals between them. Each layer it controls is a layer competitors can't easily replicate.

This puts Nvidia in a different competitive position than AMD or Intel, who primarily sell processors and leave the data center design to customers. Nvidia is building something closer to what Apple does with consumer hardware: controlling enough of the stack that the integrated solution works better than any mix-and-match alternative.

Open Questions

A few things worth watching that aren't yet clear from the announcement:

- Exclusivity terms. Does the warrant structure give Nvidia exclusive access to a portion of Corning's output, or just preferred pricing and supply guarantees? The competitive implications are very different.

- Timeline to production. Building new factories takes time. When will the 10x capacity actually come online? The 3,000 jobs figure suggests significant construction, which typically means 18-36 months before full production.

- Impact on NVLink roadmap. Current NVLink generations use copper. If Nvidia is investing this heavily in optical, it likely plans to transition NVLink to optical in future GPU architectures. That's a major shift in how GPU clusters are physically built.

- Competitive response. Broadcom and Intel are also pursuing co-packaged optics. Will they make similar supply chain investments, or rely on the open market?

The Bottom Line

The Nvidia-Corning deal is easy to overlook in a world where $3.2 billion barely makes headlines anymore. But it's a signal worth paying attention to. The AI infrastructure race has moved past "who has the most GPUs" and into "who can make those GPUs actually work together at scale." Optical interconnects are the answer the industry is converging on, and Nvidia just locked down the supply chain for the physical layer.

For anyone tracking AI infrastructure investments — or trying to understand where the actual bottlenecks are — this deal says more about the next phase of AI scaling than another GPU count announcement ever could. The GPUs are the engine, but the glass is the nervous system. Nvidia is betting $3.2 billion that you can't have one without the other.
