Anthropic's $1.8B Akamai Deal: Compute Strategy Explained

Anthropic signed a $1.8B, 7-year cloud deal with Akamai. Here's what it means for Claude's infrastructure and the AI compute race.

Anthropic Just Signed a $1.8 Billion Cloud Deal With Akamai

Anthropic and Akamai announced a $1.8 billion, seven-year cloud computing deal on May 8, 2026 (per Bloomberg). Akamai stock surged 27% on the news. That reaction tells you something: investors see this deal as a turning point for Akamai, and it's a significant strategic move for Anthropic.

Days after Anthropic's lease of SpaceX's Colossus 1 cluster made headlines, the company made a second massive infrastructure bet, this time with a partner nobody in the AI world saw coming. Akamai is a content delivery network. It carries a large share of the world's web traffic (Akamai has long put the figure at 15-30%). It is not the name you'd put on a shortlist of AI compute providers alongside AWS, Google Cloud, and Azure.

And that's exactly the point.

Why Akamai? The Unexpected Pick

Akamai has been quietly building out its cloud computing division over the past few years, primarily through its 2022 acquisition of Linode (a developer-focused cloud platform). The company operates data centers across 25+ countries with a network backbone originally designed for low-latency content delivery. That global footprint is valuable for AI inference — the part of the pipeline where a model like Claude actually processes your requests.

Training a frontier model typically relies on a massive, tightly interconnected cluster of GPUs in one location (that's what Colossus 1 is for). Serving millions of users is a different problem: it benefits from distributed compute, with inference nodes close to where users actually are. Akamai's global edge network is built for exactly that kind of distribution.

Training is centralized. Inference is distributed. Anthropic is now solving both problems with purpose-built partnerships.

My read: Anthropic didn't pick Akamai despite it being an unusual choice — they picked Akamai because of it. The CDN-turned-cloud-provider offers something AWS and Google Cloud can't: independence from companies that compete with Claude directly (Google with Gemini, Amazon with its Bedrock-first strategy and its own Nova models).

The Deal Terms: What We Know

Bloomberg's reporting gives us the key numbers:

- $1.8 billion total contract value over seven years

- Revenue for Akamai ramps starting Q4 2026, meaning infrastructure buildout is underway now

- The deal covers cloud computing services (not CDN — this is pure compute)

Neither company has disclosed the specific hardware involved — whether it's NVIDIA GPUs, custom silicon, or a mix. The Q4 2026 ramp timeline suggests Akamai is either provisioning new GPU clusters or retrofitting existing data center capacity to handle AI workloads. Seven years is a long commitment by AI industry standards, where the hardware cycle turns over every 18-24 months. That length signals Anthropic expects to need this capacity for the long haul and wants price certainty.

At roughly $257 million per year, this is a substantial but not enormous cloud spend for a company of Anthropic's scale — they reportedly hit $2 billion in annualized revenue in early 2026 (per multiple press reports). The deal is large enough to be strategically meaningful without putting all eggs in one basket.
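The arithmetic behind those figures is easy to check. Here's a minimal sketch that uses only numbers quoted in this article; the variable names are mine, and the even year-by-year split is an assumption, since the actual payment schedule hasn't been disclosed.

```python
# All inputs are figures quoted in the article; names are illustrative.
TOTAL_CONTRACT_USD = 1.8e9            # total contract value
CONTRACT_YEARS = 7                    # contract length
ANTHROPIC_ANNUALIZED_REVENUE = 2.0e9  # reported annualized revenue, early 2026
HARDWARE_CYCLE_MONTHS = (18, 24)      # hardware refresh cycle range

# Assumption: spend is spread evenly across the seven years.
annual_value_usd = TOTAL_CONTRACT_USD / CONTRACT_YEARS
share_of_revenue_pct = 100 * annual_value_usd / ANTHROPIC_ANNUALIZED_REVENUE

# How many hardware generations the contract spans at each cycle length.
contract_months = CONTRACT_YEARS * 12
generations_fast_cycle = contract_months / HARDWARE_CYCLE_MONTHS[0]
generations_slow_cycle = contract_months / HARDWARE_CYCLE_MONTHS[1]

print(f"Annualized value: ${annual_value_usd / 1e6:.0f}M per year")
print(f"Share of reported annualized revenue: {share_of_revenue_pct:.1f}%")
print(f"Hardware generations spanned: "
      f"{generations_slow_cycle:.1f} to {generations_fast_cycle:.1f}")
```

On those inputs the contract works out to about 13% of reported annualized revenue per year, and it spans roughly three and a half to nearly five hardware generations.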

Anthropic's Multi-Provider Compute Strategy

This is where the Akamai deal gets interesting — not in isolation, but as part of a pattern. Here's what Anthropic's compute portfolio now looks like:

| Provider | Role | Type | Scale |
|---|---|---|---|
| SpaceX / xAI (Colossus 1) | Dedicated training + inference cluster | Lease | 220K+ GPUs, 300 MW |
| Akamai | Distributed cloud compute (likely inference) | 7-year contract | $1.8B total value |
| Amazon Web Services | Primary cloud provider (historical) | Cloud partnership | Multi-billion (Amazon is also an investor) |
| Google Cloud | Secondary cloud provider | Cloud partnership | Undisclosed (Google is also an investor) |

Four major compute relationships. No single provider dominates. This is a deliberate multi-cloud hedging strategy, and it's notably different from how other frontier labs operate:

- OpenAI is deeply coupled to Microsoft Azure. Their compute relationship is their financial relationship — Microsoft's $13B+ investment came with Azure as the exclusive cloud provider. OpenAI can't easily diversify without renegotiating the core deal.

- Google DeepMind runs on Google's own TPU infrastructure. No diversification needed (or possible) — they are the compute provider.

- xAI builds its own clusters (Colossus 1 and 2) and now leases surplus to others. Vertically integrated.

- Meta AI operates on Meta's own GPU clusters. Same vertical integration as Google.

Anthropic is the only major frontier lab building a multi-provider compute moat without owning any infrastructure itself. That's either brilliant flexibility or a structural vulnerability, depending on how you look at it.

The Bull Case: Why This Strategy Is Smart

The strongest argument for Anthropic's approach is negotiating leverage. When you rely on one cloud provider, that provider sets the terms. When you have four providers competing for your workloads, you set the terms.

Consider the incentive dynamics:

- AWS knows Anthropic can shift workloads to Akamai or Colossus 1, which keeps pricing competitive

- Akamai gets a flagship AI customer that validates its cloud ambitions (hence the 27% stock jump — per Bloomberg, May 8)

- SpaceX/xAI monetizes idle hardware that would otherwise depreciate

- Google Cloud maintains its investment relationship but doesn't get exclusivity

There's also a resilience argument. If any single provider has an outage, capacity crunch, or pricing dispute, Anthropic can reroute. For a company whose product needs to be available 24/7 for enterprise customers, this redundancy matters.
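To make the rerouting idea concrete, here's a minimal failover sketch. It is purely hypothetical: the `Provider` class, the `pick_provider` function, the capacity numbers, and the preference order are all invented for illustration and say nothing about how Anthropic actually routes traffic.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    name: str
    healthy: bool       # hypothetical health-check result
    free_capacity: int  # hypothetical spare capacity, in arbitrary units


def pick_provider(providers, preferred_order):
    """Return the first healthy provider with spare capacity, in preference order."""
    by_name = {p.name: p for p in providers}
    for name in preferred_order:
        provider = by_name.get(name)
        if provider is not None and provider.healthy and provider.free_capacity > 0:
            return provider.name
    raise RuntimeError("no healthy provider with spare capacity")


# Example: the first-choice provider is down, so traffic falls through to the next.
providers = [
    Provider("AWS", healthy=False, free_capacity=100),
    Provider("Akamai", healthy=True, free_capacity=40),
    Provider("Colossus 1", healthy=True, free_capacity=0),
    Provider("Google Cloud", healthy=True, free_capacity=25),
]
chosen = pick_provider(providers, ["AWS", "Akamai", "Colossus 1", "Google Cloud"])
print(chosen)
```

With a single provider, the loop would have nothing to fall through to. That is the whole resilience argument in one function.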

And there's the independence argument. Both Amazon and Google sell competing AI models. Anthropic relying exclusively on either one creates an uncomfortable dependency on companies that have every incentive to favor their own products. Akamai doesn't have a competing AI model. That neutrality has real value.

The Bear Case: What Could Go Wrong

Multi-provider strategies sound great in theory. In practice, they introduce real complexity:

- Operational overhead. Running AI workloads across four different infrastructure providers means four different hardware configurations, networking stacks, and failure modes. Anthropic's engineering team has to optimize Claude's inference for each environment, which is nontrivial.

- Akamai is unproven at AI scale. Akamai has never hosted a frontier AI model's inference workloads. Their cloud division (built on the Linode acquisition) is credible for general compute, but AI workloads — especially at the scale Claude requires — demand specialized GPU clusters, high-bandwidth interconnects, and cooling infrastructure that Akamai hasn't publicly demonstrated. The Q4 2026 ramp gives them time, but this is a genuine open question.

- Seven years is a long bet. The AI hardware landscape in 2033 will look nothing like 2026. Locking in a seven-year contract means Anthropic is betting that Akamai's infrastructure will remain competitive as NVIDIA ships new GPU generations and custom AI chips mature. That's a lot of trust in a company still proving itself in cloud compute.

- Capital allocation. $1.8 billion committed to Akamai is $1.8 billion not spent on building owned infrastructure. At some point, the lease-vs-own math may tip toward Anthropic building its own data centers — as Meta, Google, and xAI all have. If that inflection comes before the seven years are up, the Akamai deal becomes an expensive legacy contract.

What the Akamai Stock Surge Tells You

Akamai's 27% stock jump on the news (per Bloomberg) is one of the most telling data points here. A $1.8B deal over seven years works out to roughly $257 million per year. Akamai's annual revenue is around $3.8 billion. So this deal adds roughly 7% to annual revenue — meaningful, but not transformative enough to justify a 27% market cap increase on its own.
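Here's that comparison as a quick sketch. The inputs are the figures quoted above; the even annual split is an assumption, and comparing a revenue uplift to a share-price move is a rough sanity check, not a valuation model.

```python
# Inputs are figures quoted in the article; names are illustrative.
TOTAL_CONTRACT_USD = 1.8e9
CONTRACT_YEARS = 7
AKAMAI_ANNUAL_REVENUE_USD = 3.8e9  # "around $3.8 billion"
STOCK_JUMP_PCT = 27.0

# Assumption: contract revenue is recognized evenly across the seven years.
annual_value_usd = TOTAL_CONTRACT_USD / CONTRACT_YEARS
revenue_uplift_pct = 100 * annual_value_usd / AKAMAI_ANNUAL_REVENUE_USD

# How many times larger the share-price move is than the revenue uplift.
jump_to_uplift_ratio = STOCK_JUMP_PCT / revenue_uplift_pct

print(f"Revenue uplift: {revenue_uplift_pct:.1f}%")
print(f"Stock jump is about {jump_to_uplift_ratio:.1f}x the revenue uplift")
```

The stock move is roughly four times the size of the revenue uplift, and that gap is what the rest of this section tries to explain.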

The market isn't just pricing in the Anthropic contract. It's pricing in Akamai becoming an AI infrastructure company. If Akamai can land one frontier AI lab, it can land more. The Anthropic deal is validation — proof that AI workloads can run on Akamai's platform at enterprise scale. That opens the door to every mid-tier AI company that wants an alternative to AWS, GCP, and Azure but doesn't have the leverage to lease a 220,000-GPU supercomputer.

I think this is the underappreciated angle of the deal. It's not just about Anthropic diversifying compute — it's about a new entrant emerging in the AI cloud market. If Akamai delivers for Claude, they become a credible fourth option for AI workloads alongside the Big Three hyperscalers.

What This Means for Claude Users

The honest answer: probably nothing you'll notice immediately. The Akamai capacity doesn't come online until Q4 2026, and the Colossus 1 cluster from the SpaceX deal is already addressing Claude's most acute capacity constraints (doubled Claude Code limits, higher Opus rate caps, reduced peak-hour throttling — all of which shipped in early May, per Anthropic's announcements).

The longer-term impact is about sustained capacity growth. Claude's user base is growing rapidly, and Anthropic needs infrastructure that scales ahead of demand, not behind it. The Akamai deal is the answer for Q4 2026 and beyond: additional inference capacity that comes online just as the Colossus 1 boost starts to feel normal rather than generous.

If Akamai's global edge network is used for inference distribution, users outside the US might eventually see lower latency. Akamai operates data centers in Europe, Asia-Pacific, and Latin America. Running Claude inference closer to end users — rather than routing everything through US-based clusters — would meaningfully improve response times for international users. Anthropic hasn't confirmed this is the plan, but it's the logical use of Akamai's network topology.
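A rough sketch shows why distance matters. Everything here is an assumption for illustration: the region names and coordinates are made up rather than taken from Akamai's site list, and the latency figure is only a physical floor based on light covering roughly 200 km per millisecond in fiber. Real response times also depend on routing, queuing, and model compute time.

```python
import math

# Hypothetical inference regions with approximate (latitude, longitude).
# Illustrative only; not Akamai's actual data center list.
REGIONS = {
    "us-east": (39.04, -77.49),
    "frankfurt": (50.11, 8.68),
    "singapore": (1.35, 103.82),
    "sao-paulo": (-23.55, -46.63),
}

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # light in optical fiber: roughly 200 km per millisecond


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def min_round_trip_ms(a, b):
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * haversine_km(a, b) / FIBER_KM_PER_MS


def nearest_region(user, regions=REGIONS):
    """Pick the region with the shortest great-circle distance to the user."""
    return min(regions, key=lambda name: haversine_km(user, regions[name]))


paris = (48.86, 2.35)
best = nearest_region(paris)
print(best, f"{min_round_trip_ms(paris, REGIONS[best]):.1f} ms floor")
print("us-east", f"{min_round_trip_ms(paris, REGIONS['us-east']):.1f} ms floor")
```

Geographic distance is only a proxy for network latency, but the gap between a nearby region and a transatlantic one is large enough that the proxy makes the point.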

The Compute Wars Are Just Getting Started

In the past week alone:

- Anthropic signed two major compute deals (SpaceX Colossus 1 + Akamai) worth billions combined

- NVIDIA announced an investment of up to $3.2 billion in Corning for AI optical infrastructure

- Microsoft/OpenAI continue building toward the Stargate supercomputer

- xAI is expanding Colossus 2 while monetizing Colossus 1

Every major player is making massive, long-term infrastructure bets. The frontier labs that locked in compute early will be able to train bigger models, serve more users, and iterate faster. The ones that didn't will be stuck in cloud waitlists.

Anthropic's strategy — no owned infrastructure, maximum provider diversity — is the most unconventional of the bunch. Whether it proves to be the smartest or the most fragile depends on execution. But the Akamai deal shows Anthropic isn't just reacting to compute scarcity. They're building a deliberate, diversified supply chain for intelligence.

That's either the most important strategic decision in AI right now, or a really expensive way to avoid building your own data centers. We'll know which by 2028.

Keep reading

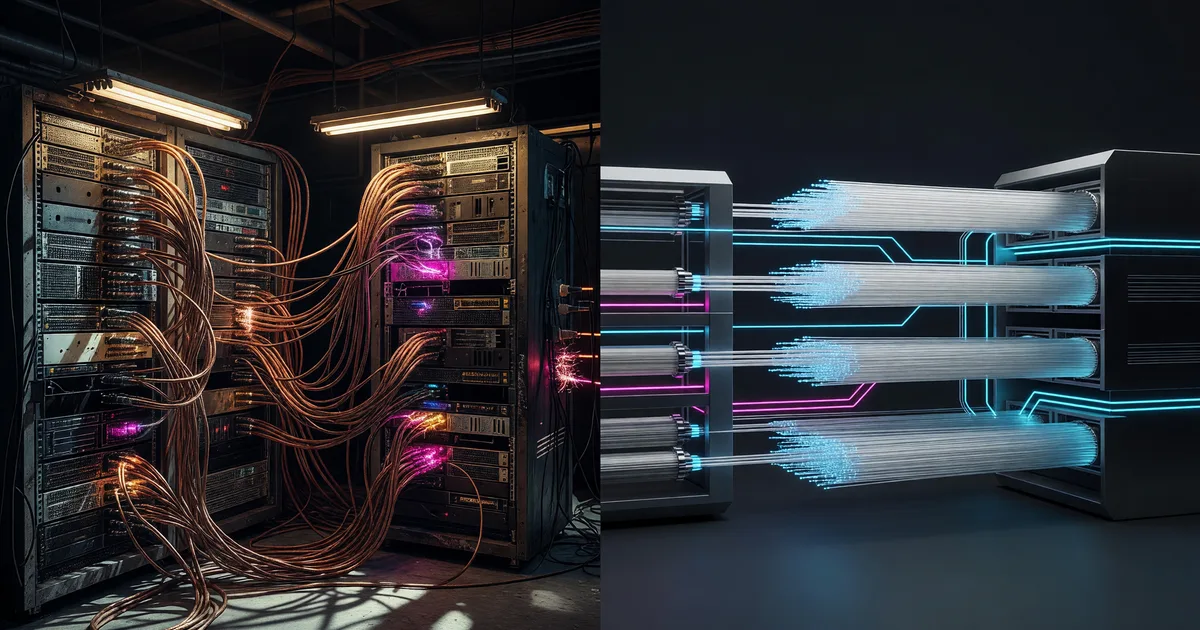

Nvidia's $3.2B Corning Deal: Why AI Needs Optics

Nvidia is investing up to $3.2B in Corning for AI optical infrastructure. Why glass fibers are replacing copper in GPU clusters.

Sierra's $950M Raise: Enterprise AI Agents Explained

Sierra just raised $950M at a $15B valuation. Here's what Bret Taylor's enterprise AI agent platform actually does and why it matters.

Anthropic's SpaceX Deal: 220K GPUs for Claude

Anthropic leased SpaceX's entire Colossus 1 cluster — 220K GPUs, 300MW. Here's what changes for Claude users.