Meta Llama

Meta's open-weight LLM family. Llama 4 brings mixture-of-experts, native multimodal input, and up to 10M token context — all free to download and deploy.

Pricing verified 2026-05-02

Overview

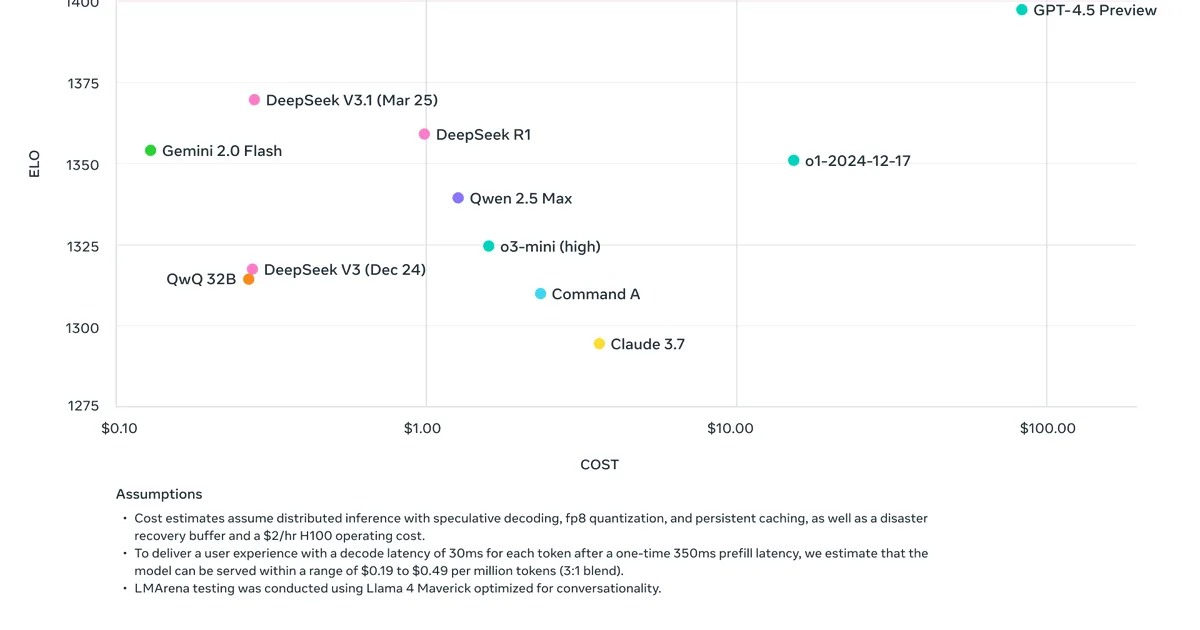

Meta's Llama 4 family is among the strongest open-weight model lines available, built on a mixture-of-experts (MoE) architecture that keeps inference efficient despite large total parameter counts. Llama 4 Scout (17B active / 109B total parameters, 16 experts) handles up to 10 million tokens of context, the longest window of any widely available model at its release, while Llama 4 Maverick (17B active / 400B total, 128 experts) targets quality-critical tasks, with Meta-reported benchmark scores competitive with GPT-4o and Gemini 2.0 Flash. Both models natively process text and images without bolted-on adapters.

The ecosystem around Llama is what sets it apart. Thousands of fine-tuned Llama variants exist on Hugging Face, covering medical, legal, code, and multilingual niches. You can run Int4-quantized Llama 4 Scout on a single high-end data-center GPU (Meta cites an H100), or deploy Maverick across a small cluster. The major clouds (AWS Bedrock, Azure AI, Google Cloud) and inference providers such as Together, Groq, and Fireworks offer Llama 4 as a managed API, typically at a fraction of closed-model pricing. Meta AI at meta.ai also provides a free consumer chatbot powered by Llama 4 with web search, image generation, and integration across WhatsApp, Instagram, and Messenger.
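To make the hardware sizing concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone occupy at different precisions. It assumes memory scales as total parameters times bits per weight, and it ignores the KV cache, activations, and runtime overhead, so real requirements are higher. The function name is illustrative, not part of any library.

```python
def weight_memory_gb(total_params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = total_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Total (not active) parameter counts from the overview above.
for name, params_b in [("Scout", 109), ("Maverick", 400)]:
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params_b, bits)
        print(f"{name} at {bits}-bit: ~{gb:.1f} GB of weights")
```

Note that the total parameter count drives memory, not the 17B active count: every expert has to be resident even though only a few run per token.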

The trade-off is clear: Llama gives you control and cost savings, but you're responsible for the infrastructure, guardrails, and tooling that closed-source providers bundle in. For teams with ML engineering capability, it's often the best dollar-for-quality option. For individuals wanting a polished chat experience, the Meta AI chatbot works but doesn't match ChatGPT or Claude in UX refinement.

Key features

Llama 4 MoE Architecture

Mixture-of-experts design activates only 17B parameters per token while drawing on up to 400B total. This delivers frontier-class quality at a fraction of the compute cost of dense models of equivalent capability.
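With 17B active parameters, each token touches roughly 16% of Scout's 109B weights and about 4% of Maverick's 400B. The toy sketch below shows the general idea of top-k expert routing that makes this possible. It is not Meta's implementation: the experts are placeholder functions, the router scores are random, and all names are hypothetical.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_scores, top_k=1):
    """Return [(expert_index, weight)] for the top_k experts; weights sum to 1."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def moe_forward(x, experts, router_scores, top_k=1):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in route_token(router_scores, top_k))


# 16 toy experts (the count matches Scout; the experts themselves are stand-ins).
experts = [lambda x, s=s: x * (s + 1) for s in range(16)]
random.seed(0)
scores = [random.gauss(0, 1) for _ in experts]  # a real router computes these from the token

print(route_token(scores, top_k=1))
print(moe_forward(2.0, experts, scores, top_k=1))
```

Because only the selected experts execute, compute per token tracks the active parameter count, while quality can draw on the capacity of the full expert pool.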

Native Multimodal

Llama 4 models process images and text natively — no separate vision adapter. Supports image understanding, chart reading, document analysis, and visual question answering out of the box.
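As an illustration of what a combined image-and-text request can look like, the sketch below builds a payload in the OpenAI-style chat completions format that many hosted inference services accept. Whether a particular provider's Llama 4 endpoint accepts image parts in this shape is an assumption to check against that provider's documentation, and the model ID shown is a made-up placeholder.

```python
import base64
import json


def build_image_question(model: str, image_bytes: bytes, question: str,
                         mime: str = "image/png") -> dict:
    """Build a chat payload with one text part and one inline (base64) image part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:{mime};base64,{encoded}"
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }


# Placeholder bytes and a hypothetical model ID, for illustration only.
payload = build_image_question(
    "example-llama-4-scout", b"\x89PNG...", "What trend does this chart show?"
)
print(json.dumps(payload, indent=2)[:200])
```

The payload would then be sent as the JSON body of a POST request to the provider's chat completions endpoint, with the provider's own authentication.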

10M Token Context

Llama 4 Scout supports up to 10 million tokens of context, the longest window of any widely available model at its release. Maverick supports 1M tokens. Both enable processing entire codebases, book-length documents, or extended conversation histories.
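A quick way to sanity-check whether a document set fits is to estimate its token count before sending it. The sketch below uses a rough four-characters-per-token heuristic for English text, which is an assumption: real tokenizers vary by language and content, so treat the result as an estimate only. The function and constant names are illustrative.

```python
CONTEXT_LIMITS = {"scout": 10_000_000, "maverick": 1_000_000}  # tokens, as stated above
CHARS_PER_TOKEN = 4  # rough heuristic for English text; real tokenizers differ


def estimate_tokens_from_chars(num_chars: int) -> int:
    """Estimate token count from character count (ceiling division)."""
    return -(-num_chars // CHARS_PER_TOKEN)


def fits_in_context(num_chars: int, model: str, reserve_for_output: int = 4_096) -> bool:
    """True if the estimated prompt plus reserved output tokens fits the window."""
    needed = estimate_tokens_from_chars(num_chars) + reserve_for_output
    return needed <= CONTEXT_LIMITS[model]


book_chars = 600_000        # a hypothetical book-length document
codebase_chars = 32_000_000  # a hypothetical large codebase

for label, chars in [("book", book_chars), ("codebase", codebase_chars)]:
    for model in CONTEXT_LIMITS:
        print(label, model, fits_in_context(chars, model))
```

For exact counts, tokenize with the model's own tokenizer instead of the character heuristic.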

Open Weights & Fine-tuning

Download full model weights under the Llama Community License. Fine-tune with LoRA, QLoRA, or full-parameter training on your own data. Quantized builds cut memory needs substantially, although Llama 4's total parameter counts still call for high-memory hardware; you can also deploy at scale on any cloud.
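To show why LoRA is so much cheaper than full-parameter training, the sketch below counts trainable parameters for a single weight matrix. LoRA freezes the original matrix and trains two low-rank factors, so the trainable count is rank × (d_out + d_in) instead of d_out × d_in. The layer size and rank used here are hypothetical values chosen for illustration, not Llama 4's actual dimensions.

```python
def full_trainable_params(d_out: int, d_in: int) -> int:
    """Parameters updated when fine-tuning the whole weight matrix."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA trains B (d_out x rank) and A (rank x d_in); the base matrix stays frozen."""
    return rank * (d_out + d_in)


d_out, d_in, rank = 4096, 4096, 16  # hypothetical layer shape and adapter rank

full = full_trainable_params(d_out, d_in)
lora = lora_trainable_params(d_out, d_in, rank)
print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

For this example shape the adapter trains under 1% of the layer's weights. QLoRA applies the same adapters on top of a 4-bit quantized frozen base model, which lowers memory further.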

Pricing

Free tier: The weights are free to download, so self-hosting costs only your own hardware and power. The Meta AI chatbot is free for consumer use.

| Plan | Price | What's included |
|---|---|---|
| Self-hosted | Free (weights) | Download and run on your own hardware. Int4-quantized Scout fits on a single 80GB H100-class GPU; Maverick needs a multi-GPU setup. |
| Meta AI Chatbot | Free | Consumer chatbot at meta.ai with web search, image generation, and social platform integration. No API access. |
| Cloud APIs | ~$0.10–0.90 per million tokens | Available on AWS Bedrock, Azure, Google Cloud, Together, Groq, Fireworks, and others. Pricing varies by provider and model size. |
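For budgeting against the Cloud APIs row, a minimal cost sketch follows. It assumes a single flat per-million-token rate, which is a simplification: providers usually price input and output tokens separately. The monthly volume is a hypothetical figure.

```python
def monthly_cost_usd(tokens_per_month: int, price_per_million_usd: float) -> float:
    """Cost at a flat per-million-token rate (a simplification of real pricing)."""
    return tokens_per_month / 1_000_000 * price_per_million_usd


LOW_RATE, HIGH_RATE = 0.10, 0.90  # USD per million tokens, the approximate range quoted above
tokens = 500_000_000              # hypothetical monthly volume

low = monthly_cost_usd(tokens, LOW_RATE)
high = monthly_cost_usd(tokens, HIGH_RATE)
print(f"Estimated monthly spend: ${low:,.2f} to ${high:,.2f}")
```

Comparing this range with the cost of renting or owning suitable GPUs gives a first-order view of when self-hosting starts to pay off.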


Pros & cons

Pros

- ✓ Fully open weights: download, modify, and deploy without per-query costs

- ✓ Llama 4 Scout offers a 10M token context, the longest of any widely available model at its release

- ✓ MoE architecture delivers strong quality at much lower inference cost than dense models

- ✓ Massive ecosystem of fine-tuned variants, tooling, and cloud provider support

- ✓ Meta AI chatbot provides free consumer access across WhatsApp, Instagram, and Messenger

Cons

- × Still trails top closed models (Claude Opus 4, GPT-4.5) on complex reasoning and nuanced writing

- × Running larger models locally requires serious hardware; Maverick needs multi-GPU setups

- × No official desktop app or IDE integration; consumer experience limited to Meta AI chatbot

- × Llama Community License requires companies with more than 700M monthly active users to obtain a separate license from Meta