GPT-5.5

OpenAI's most capable frontier model, built for complex multi-step reasoning, agentic tool use, and deep coding tasks. Powers ChatGPT and Codex, with a context window of up to 272K tokens.

Overview

GPT-5.5 is OpenAI's strongest model to date, aimed at sustained production work rather than one-off demos. It launched in late April 2026 and immediately became the default model for ChatGPT Plus, Team, and Enterprise users. It also powers the Codex agent for autonomous software engineering tasks.

What separates GPT-5.5 from GPT-5 and earlier models is its focus on agentic capability. Rather than only answering questions, it plans multi-step workflows, uses tools (code execution, web browsing, file handling), self-corrects when things go wrong, and completes complex goals with minimal hand-holding. The 272K-token context window means it can ingest large codebases, lengthy documents, or extended conversation histories in a single request.

For developers specifically, GPT-5.5 posts new top scores on SWE-bench and other coding evaluations, and the Codex integration lets it autonomously write, test, and iterate on pull requests. API pricing of $5 per million input tokens and $30 per million output tokens is steep, but it reflects the model's position as a premium reasoning engine. OpenAI also rolled out GPT-5.5-Cyber, a specialized variant tuned for cybersecurity analysis.

Key features

Frontier Model

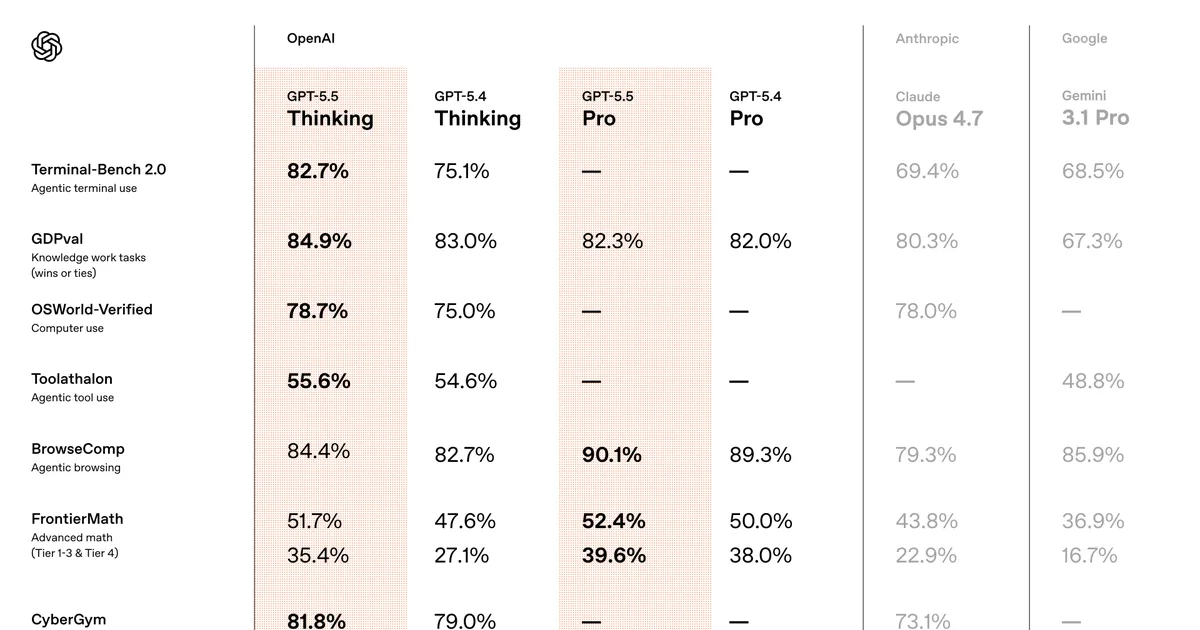

OpenAI's most capable model, with state-of-the-art reasoning, instruction following, and code generation. Leads on SWE-bench, GPQA, and multi-step problem-solving benchmarks.

Agentic

Built for autonomous multi-step workflows — plans tasks, calls tools (code interpreter, browser, file I/O), self-corrects, and completes complex goals without constant prompting.
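Seen from the client side, the plan, call, and self-correct cycle can be pictured as a simple loop. The sketch below is a toy illustration only: it does not use OpenAI's API and says nothing about GPT-5.5's internals. The tool `run_python`, the stand-in `scripted_model`, and the message format are all hypothetical names invented for this example.

```python
from typing import Callable, Dict, List


def run_python(expr: str) -> str:
    """Hypothetical stand-in for a code-execution tool; only handles 'a + b'."""
    left, right = expr.split("+")  # raises ValueError if there is no '+'
    return str(int(left) + int(right))


# Hypothetical tool registry; a real agent would expose many more tools.
TOOLS: Dict[str, Callable[[str], str]] = {"run_python": run_python}


def scripted_model(history: List[dict]) -> dict:
    """Stand-in for the model: a fixed policy that repairs its call after an error."""
    last = history[-1]
    if last["role"] == "user":
        # First attempt is malformed on purpose, to exercise the retry path.
        return {"tool": "run_python", "argument": "2 plus 3"}
    if last["content"].startswith("error"):
        return {"tool": "run_python", "argument": "2 + 3"}  # corrected retry
    return {"final": f"The answer is {last['content']}"}


def run_agent(task: str, model=scripted_model, max_steps: int = 5) -> str:
    """Loop: ask the model for an action, run the tool, feed the result back."""
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        action = model(history)
        if "final" in action:
            return action["final"]
        try:
            result = TOOLS[action["tool"]](action["argument"])
        except Exception as exc:
            # Failures go back into the history so the model can self-correct.
            result = f"error: {exc}"
        history.append({"role": "tool", "content": result})
    raise RuntimeError("step budget exhausted")


if __name__ == "__main__":
    print(run_agent("What is 2 + 3?"))
```

The step budget (`max_steps`) is a design choice worth keeping in any real loop of this shape: it bounds cost if the model keeps retrying a call that never succeeds.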

272K Context

The 272K-token context window lets you feed in entire repositories, long documents, or extended conversations. Supports structured output, function calling, and vision.
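Whether a given repository or document set actually fits depends on its token count. As a rough pre-check, the sketch below estimates tokens from character counts. It assumes about 4 characters per token, which is a common rule of thumb for English text and not the model's real tokenizer; the function names and the 10% safety margin are likewise illustrative assumptions.

```python
import math
from typing import Iterable

CONTEXT_WINDOW_TOKENS = 272_000  # figure quoted in this article
CHARS_PER_TOKEN = 4.0            # ASSUMPTION: rough heuristic, not the real tokenizer


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Rough token estimate from character count, rounded up."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(documents: Iterable[str], safety_margin: float = 0.10) -> bool:
    """True if the estimated total stays under the window minus a safety margin.

    The margin (an assumption) leaves headroom for estimation error.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    budget = CONTEXT_WINDOW_TOKENS * (1.0 - safety_margin)
    return total <= budget


if __name__ == "__main__":
    # Hypothetical input: two files of 400,000 characters each (~200K tokens).
    files = ["a" * 400_000, "b" * 400_000]
    print(fits_in_context(files))
```

Code and non-English text often tokenize less efficiently than this heuristic suggests, so treat a borderline result as a signal to count tokens with the provider's own tooling.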

Pricing

Free tier: No free API tier. Free ChatGPT users get GPT-4o, not GPT-5.5.

| Plan | Price | What's included |
|---|---|---|
| ChatGPT Plus | $20/mo | Access to GPT-5.5 as default model, Codex agent, standard rate limits |
| ChatGPT Pro | $200/mo | Highest rate limits, priority access, extended Codex usage |
| API (≤272K input tokens) | $5 input / $30 output per 1M tokens | Function calling, structured output, vision |
| API (>272K input tokens) | Higher rates | Extended-context pricing for inputs exceeding 272K tokens |
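To make the API rates concrete, here is a minimal Python sketch of the arithmetic, using the $5 input and $30 output per-million-token figures from the table. The function name `estimate_cost_usd` and the example workload are illustrative assumptions. The sketch rejects inputs above 272K tokens because the table gives no figure for the extended-context rate.

```python
# Rates quoted in the pricing table (USD per 1M tokens, inputs up to 272K tokens).
INPUT_USD_PER_MILLION = 5.00
OUTPUT_USD_PER_MILLION = 30.00
STANDARD_INPUT_LIMIT = 272_000


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request at the standard (<=272K input) rates."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if input_tokens > STANDARD_INPUT_LIMIT:
        # Only "Higher rates" is listed above 272K, so no number is assumed here.
        raise ValueError("input exceeds 272K tokens; extended-context rate unknown")
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MILLION
    return round(input_cost + output_cost, 6)


if __name__ == "__main__":
    # Hypothetical workload: 200K tokens of code in, 8K tokens of patch out.
    # Input: 0.2 * $5 = $1.00; output: 0.008 * $30 = $0.24.
    print(estimate_cost_usd(200_000, 8_000))
```

Because output tokens cost six times as much as input tokens, long generated responses tend to dominate the bill even when the prompt is large.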


Pros & cons

Pros

- ✓ Top-of-class coding and reasoning benchmarks, measurably ahead of GPT-5 and competing alternatives

- ✓ True agentic capability with tool use, self-correction, and multi-step task completion

- ✓ 272K-token context handles entire codebases without chunking workarounds

- ✓ Codex integration turns it into an autonomous software engineer inside ChatGPT

Cons

- × API pricing is premium: $30 per 1M output tokens adds up fast under heavy usage

- × No free tier for the API; ChatGPT Free users are stuck on GPT-4o

- × Slower inference than lighter models like GPT-4o-mini for simple tasks

- × Closed-source with no self-hosting option, which means full vendor lock-in to OpenAI

How it compares

| Tool | Best for | Pricing | Score |
|---|---|---|---|
| GPT-5.5 | - | API: $5/$30 per 1M tokens (in/out). ChatGPT Plus $20/mo, Pro $200/mo | 9.4/10 |
| Cursor | - | Freemium | 9.5/10 |
| Windsurf | - | Freemium | 9.1/10 |
| v0 by Vercel | - | Free tier + Premium $20/mo | 9/10 |